If You Are Reluctant to Adopt a Dog: Here’s Why You Need to Do It

If you feel hesitant to adopt a dog, there are several reasons that may help you stop feeling this way. Since bringing a dog

Welcome to the Ydog blog, the only pet blog you will ever love!

At Ydog, we want to help you improve your relationship with your pets and their education.

Please feel free to notify us if you have issues with our blog or our articles.

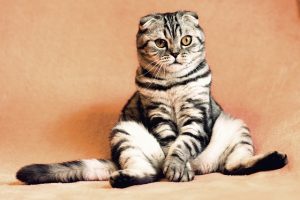

Having a cat at home can bring enormous joy, unlimited smiles, and happiness to your life. However, there are more reasons why you need

Bringing a kitten home will undoubtedly bring a lot of joy to your life. However, before doing so, you need to prepare yourself

Undoubtedly, having a dog at home brings a lot of joy. However, a dog is a vulnerable animal. Therefore, before adopting a dog, there

There are many things you need to consider before adopting a cat. Indeed, bringing a cat home can seem like an easy task. However,

To improve your pet's happiness, and yours too, by the way!